Artificial Intelligence (AI) marks a technological transformation that is fundamentally different from any that came before it. While previous tools primarily enhanced human physical capabilities, AI competes with a core human attribute: the ability to generate, interpret, and apply knowledge. This is what makes AI uniquely powerful.

According to reports published on News360Plus.com, citing foreign news agencies ANI and China Daily, this power gives AI the capacity to profoundly reshape individual identity, economic structures, and social organization. Alongside its vast benefits, AI also poses serious risks, making the need for a comprehensive and globally coordinated governance strategy unavoidable.

Framing the debate as a simple trade-off between efficiency and safety is insufficient. AI must be examined holistically, considering its various forms, applications, and future trajectories. Much of the public discourse focuses on Artificial General Intelligence (AGI), a vague concept that promises human-level performance across all cognitive tasks, even though human intelligence itself is difficult to define precisely.

Achieving human-like intelligence is not merely about outperforming humans in specific tasks. The crucial factor is autonomy: the flexible ability to understand the world, integrate diverse skills, and pursue goals independently. There remains a significant gap between today’s conversational AI systems and truly autonomous systems capable of replacing humans in complex organizational environments.

Examples such as autonomous driving systems, smart grids, smart factories, smart cities, and automated telecommunications networks highlight this challenge. These systems are highly complex, safety-critical, and multi-agent in nature, requiring coordination between individual objectives and collective system goals. Current machine learning capabilities fall far short of reliably supporting such systems.

For future AI systems to be trustworthy, they must demonstrate robust reasoning, comply with technical, legal, and ethical standards, and achieve a level of reliability that is currently considered extremely difficult to attain. A fundamental limitation lies in the opaque “black box” nature of AI systems, which makes safety assurance nearly impossible to achieve using traditional certification methods.

Beyond technical risks, AI introduces significant human and systemic risks. These include misuse, overreliance, regulatory non-compliance, and market-driven prioritization of speed over safety. A particularly underappreciated risk is the transfer of cognitive tasks to machines, which may lead to declining critical thinking, weakened personal responsibility, and intellectual uniformity.

Addressing this complex risk landscape requires a comprehensive, human-centered vision for AI that goes beyond the narrow pursuit of AGI promoted by major technology companies. This vision must honestly assess current limitations, reject the “move fast and break things” mentality, promote global standards, and encourage international cooperation. With its strong industrial base and emerging governance initiatives, China is well positioned to contribute to a safer, more trustworthy, and socially aligned global AI future.

The Woodcutter and the Axe

Long ago, there lived a woodcutter in a small village. He was sincere in his work and very honest. Every day, he set out into

Why Reading More Books Wasn’t Making Me Smarter

I Realized I Had Been Reading Wrong My Entire Life Two years ago, I went on a non-fiction reading spree. You might be familiar with the

Israeli intelligence chief’s brother charged with smuggling cigarettes into Gaza

Israeli prosecutors have charged the brother of the head of the country’s intelligence agency, Shin Bet, with “aiding the enemy in wartime” by allegedly smuggling

Ministry of Defence Jobs 2026 February Apply Online Sub Inspectors, Special Operators, Supervisors & Others

Positions: === BPS-18 === 03 Deputy Director (Vetting) 01 Deputy Director (Signals) === BPS-17 === 16 Assistant Director 01 Assistant Director (Engineering) 03 Assistant

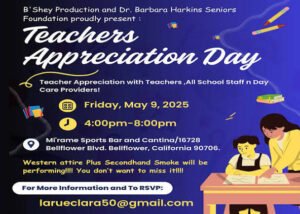

Teacher Appreciation Day 2025

In the United States, National Teacher Appreciation Day 2025 is on Tuesday, May 6, 2025. It is the centerpiece of Teacher Appreciation Week, which runs from May 5 to May

Online Earning Opportunities in Pakistan 2026

LiCrown AI is a web-based digital platform that gained significant attention in early 2026, particularly in Pakistan, as an “AI-powered” online earning or investment system.